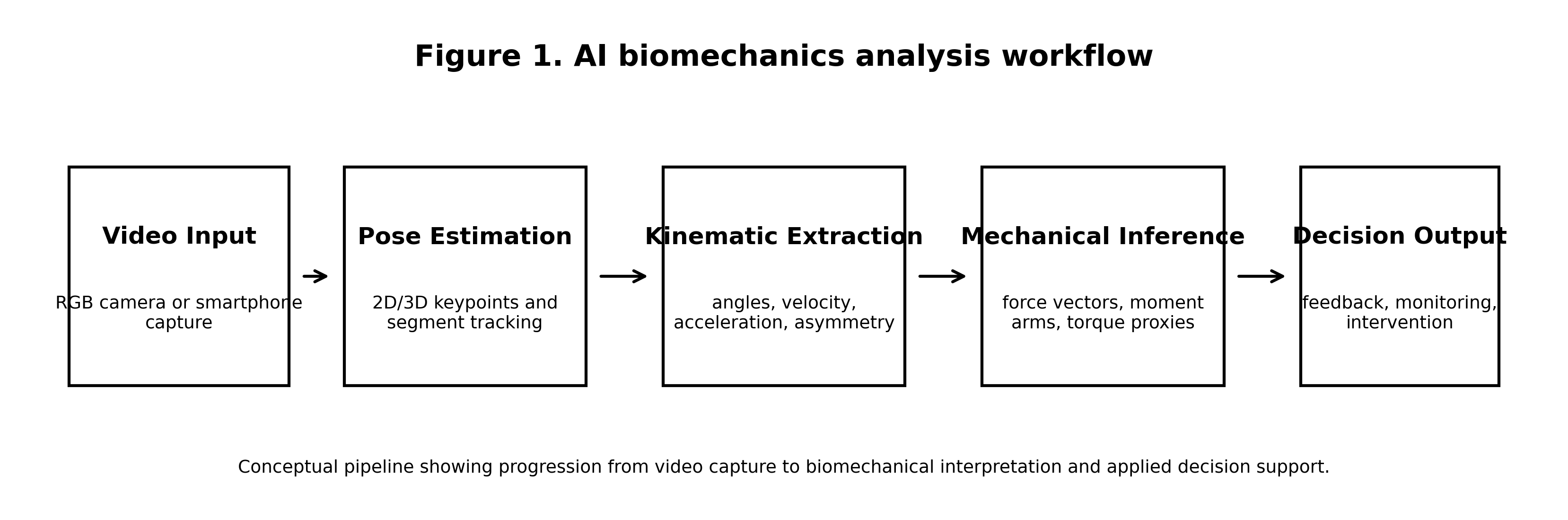

Artificial intelligence is rapidly transforming the way human movement is analysed across sports science, rehabilitation, and clinical biomechanics. Traditional motion analysis has historically depended on laboratory-based instrumentation which, while highly accurate, remains expensive and difficult to deploy in real-world environments. The emergence of AI-based biomechanics analysis software — integrating computer vision, machine learning, and real-time motion tracking — has enabled markerless movement analysis using standard video inputs. This article examines the technological foundations, mechanical interpretation, and practical applications of AI-driven movement analysis systems. Particular emphasis is placed on the translation of AI-derived kinematic data into meaningful mechanical insights through principles such as force vector orientation, torque distribution, and load transfer pathways within the MMSx mechanical framework.

Introduction

The scientific analysis of human movement has long occupied a central position within biomechanics, sports science, and clinical rehabilitation. Understanding how the musculoskeletal system generates and transmits forces is fundamental to improving performance and preventing injury. Despite their scientific value, traditional biomechanical assessment systems remain limited in accessibility and ecological validity due to specialised equipment and controlled environment requirements.

Recent advances in artificial intelligence and computer vision have begun to transform this landscape. Markerless motion capture technologies driven by deep learning algorithms now enable the estimation of joint positions directly from video data. However, the emergence of AI introduces a critical challenge: the distinction between kinematic observation and mechanical interpretation. While AI detects joint angles, it does not inherently explain the mechanical forces acting within the system. The MMSx framework addresses this by interpreting AI motion data through a mechanical decision-science perspective.

Traditional vs. AI-Driven Systems

The bottleneck in biomechanical assessment has never been sensor resolution — it has been the human capacity to interpret movement at scale, in real time, under ecologically valid conditions. Table 1 summarises the core differences between laboratory gold standards and emerging AI technologies.

| Aspect | Marker-Based (Laboratory) | AI-Driven (Markerless) |
|---|---|---|
| Environment | Controlled Lab / Infrared Cameras | Standard RGB Video / Field / Clinic |
| Setup Time | 30–60 min (Marker Placement) | Minimal (Camera Positioning) |
| Kinematic Accuracy | Gold Standard (<1–2°) | 3–8° MAE (Functional Tasks) |
| Kinetic Estimation | Direct (Force Plates) | Inferred (F = ma / Sensor Fusion) |
| Accessibility | High Cost / Specialised Personnel | Low Cost / Democratised Access |

Mechanical Foundations: Linking Kinematics to Load

Within the MMSx framework, AI-derived data must be translated into mechanical loading variables. AI tells us how the body moves; biomechanics explains why. Two foundational equations are critical to this translation.

The Newtonian Force Equation

$$F = ma$$

Here $F$ is the net force acting on a body, $m$ is its mass, and $a$ is its acceleration: a net force is present whenever a mass is accelerated. By deriving acceleration from sequential video frames, AI software can estimate the forces transmitted during tasks such as landing or deceleration manoeuvres. The accuracy of this estimate depends directly on the quality of pose estimation and on the frame rate.
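As a rough illustration of this estimation step, the sketch below derives acceleration from equally spaced position samples by central finite differences and multiplies it by mass. It assumes a single tracked point (for example, a whole-body centre-of-mass estimate) that is already calibrated to metres and sampled at a constant frame rate. The function names and the synthetic free-fall trajectory are hypothetical and are not taken from any specific AI software. Because F = ma gives the net force, the vertical ground reaction force is obtained by adding body weight.

```python
def central_difference_acceleration(positions, fps):
    """Estimate acceleration (m/s^2) from equally spaced positions (m).

    Uses the second-order central difference, so the first and last
    samples have no estimate and the result is two samples shorter.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    if len(positions) < 3:
        raise ValueError("need at least three position samples")
    dt = 1.0 / fps
    return [
        (positions[i + 1] - 2.0 * positions[i] + positions[i - 1]) / dt ** 2
        for i in range(1, len(positions) - 1)
    ]


def net_force(mass_kg, accelerations):
    """Net force (N) on the body from F = m * a."""
    return [mass_kg * a for a in accelerations]


def vertical_grf(mass_kg, accelerations, g=9.81):
    """Vertical ground reaction force (N), assuming upward is positive.

    From GRF - m * g = m * a, it follows that GRF = m * (a + g).
    """
    return [mass_kg * (a + g) for a in accelerations]


# Synthetic example (assumed data): a 70 kg body in free fall at 100 fps.
fps = 100.0
heights = [1.0 - 0.5 * 9.81 * (i / fps) ** 2 for i in range(5)]
acc = central_difference_acceleration(heights, fps)
print(net_force(70.0, acc))     # net force is the body weight, pointing down
print(vertical_grf(70.0, acc))  # approximately zero while airborne
```

Double differentiation amplifies keypoint noise, so position data are normally low-pass filtered before a calculation of this kind. That is one reason the estimate is so sensitive to pose estimation quality and frame rate.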

The Torque Equation

$$\tau = F \cdot d$$

Here $\tau$ is the joint torque, $F$ is the applied force, and $d$ is the moment arm, the perpendicular distance from the joint axis to the line of action of the force. AI pose estimation provides the coordinates needed to approximate joint centres and moment arms, which, combined with estimated forces, allow joint torque distribution to be modelled across the kinetic chain.
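A minimal sketch of this calculation in the 2D image plane follows. It assumes the joint centre and the point of force application are already expressed in metres and that the force vector has been estimated separately. The function names and numerical values are hypothetical. The result is a quasi-static external moment that ignores segment inertia and muscle forces, so it should be read as an approximation rather than a full inverse-dynamics solution.

```python
import math


def joint_torque_2d(joint_centre, force_point, force):
    """External torque (N*m) about a joint from a planar force.

    joint_centre, force_point: (x, y) in metres.
    force: (Fx, Fy) in newtons.
    Computes the z-component of r x F, where r runs from the joint
    centre to the point of force application. Counter-clockwise is positive.
    """
    rx = force_point[0] - joint_centre[0]
    ry = force_point[1] - joint_centre[1]
    return rx * force[1] - ry * force[0]


def moment_arm(joint_centre, force_point, force):
    """Perpendicular distance (m) from the joint to the force line of action."""
    magnitude = math.hypot(force[0], force[1])
    if magnitude == 0.0:
        raise ValueError("force magnitude must be non-zero")
    return abs(joint_torque_2d(joint_centre, force_point, force)) / magnitude


# Assumed example: knee centre 0.5 m above the ground, a 700 N vertical
# force applied 0.1 m in front of the knee at ground level.
knee = (0.0, 0.5)
centre_of_pressure = (0.1, 0.0)
grf = (0.0, 700.0)
print(joint_torque_2d(knee, centre_of_pressure, grf))
print(moment_arm(knee, centre_of_pressure, grf))
```

Repeating the calculation for the hip and ankle with the same force vector gives one simple way to compare torque demand across joints.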

Interpretation via the MMSx Framework

Within the MMSx paradigm, AI functions not just as a tracking tool but as a computational extension of biomechanical reasoning. The framework transforms raw kinematic outputs — joint angles, segment positions, velocity vectors — into mechanically grounded clinical insights. Table 2 summarises key mechanical parameters interpreted from AI kinematics and their clinical significance.

| Parameter | MMSx Interpretation | Clinical Insight |
|---|---|---|
| Vector Orientation | GRF relative to joint centres | Identifies inefficient load paths and off-axis force application |
| Torque Distribution | Proximal vs. Distal demand ratio | Detects joint overload (e.g., knee vs. hip dominance) |
| Load Pathways | Multi-segment coordination efficiency | Detects kinetic chain disruptions and compensatory loading |
| Shear vs. Compression | Force components at joint angle | Assesses differential ligament vs. articular cartilage stress |
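To make the shear versus compression row concrete, the sketch below projects a planar force onto the long axis of a segment and onto the direction perpendicular to it. This is an illustrative simplification, not the MMSx method itself: it assumes the segment orientation comes from two pose keypoints, that the force is applied at the distal end, and that muscle forces are ignored. The function name and sign conventions are assumptions chosen for this example.

```python
import math


def decompose_force(force, proximal, distal):
    """Split a planar force into axial and shear components (N).

    force: (Fx, Fy) in newtons, applied at the distal end of the segment.
    proximal, distal: (x, y) keypoints defining the segment long axis.
    Axial is positive when the force points from distal towards proximal
    (compressive). Shear is positive 90 degrees counter-clockwise from
    the distal-to-proximal axis.
    """
    ax = proximal[0] - distal[0]
    ay = proximal[1] - distal[1]
    length = math.hypot(ax, ay)
    if length == 0.0:
        raise ValueError("proximal and distal keypoints coincide")
    ux, uy = ax / length, ay / length
    axial = force[0] * ux + force[1] * uy
    shear = force[0] * (-uy) + force[1] * ux
    return axial, shear


# Assumed example: a 700 N vertical force on a shank inclined forwards.
axial, shear = decompose_force((0.0, 700.0), proximal=(0.3, 0.4), distal=(0.0, 0.0))
print(axial, shear)
```

As the segment tilts away from the force direction, the shear share grows and the axial share shrinks. That geometric relationship underlies the shear versus compression row of the table.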

Pose estimation describes how the body moves. The MMSx framework interprets how force is directed, distributed, and transferred during that movement. Together they turn video data into a basis for mechanical decision-science.

Limitations and Validation

Despite rapid growth, several technical challenges persist in AI biomechanics deployment. Camera occlusion, in which joints are hidden from the camera's view, is a primary source of keypoint estimation error, especially during high-flexion movements or multi-person scenarios. Clothing artefacts, such as loose garments that mask body contour, and the geometric distortion produced by deep joint flexion can further degrade estimation accuracy.
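One common way to limit the effect of occlusion is to discard frames in which a required keypoint was detected with low confidence. The sketch below assumes OpenPose-style keypoints given as (x, y, confidence) triples and computes a joint angle only when all three keypoints pass a threshold. The threshold of 0.5 and the function names are illustrative assumptions, not recommended values.

```python
import math
from typing import Optional, Sequence


def joint_angle_deg(a: Sequence[float], b: Sequence[float], c: Sequence[float]) -> float:
    """Included angle (degrees) at point b between segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    return math.degrees(math.atan2(abs(cross), dot))


def gated_joint_angle(hip, knee, ankle, min_confidence: float = 0.5) -> Optional[float]:
    """Knee angle in degrees, or None if any keypoint is unreliable.

    Each keypoint is an (x, y, confidence) triple in image coordinates.
    """
    if min(hip[2], knee[2], ankle[2]) < min_confidence:
        return None
    return joint_angle_deg(hip[:2], knee[:2], ankle[:2])


# Assumed example frames: one clean detection and one occluded ankle.
print(gated_joint_angle((0.0, 1.0, 0.9), (0.0, 0.0, 0.9), (1.0, 0.0, 0.8)))
print(gated_joint_angle((0.0, 1.0, 0.9), (0.0, 0.0, 0.9), (1.0, 0.0, 0.1)))
```

Returning `None` rather than a guessed angle keeps unreliable frames out of later force and torque calculations. Whether to interpolate across the gap is a separate decision.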

Validation against reference systems, including Vicon marker-based capture, instrumented treadmills, and force platforms, remains the methodological gold standard for establishing the validity of an AI system. Published mean absolute errors for functional task analysis using OpenPose-derived systems range from 3° to 8° at major joints, with the highest accuracy at the hip and the lowest at the ankle and wrist (Cronin et al., 2019; Nakano et al., 2020; Washabaugh et al., 2022).
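The mean absolute error reported in such studies can be computed as in the sketch below, which compares a markerless joint-angle series against a time-synchronised reference series. The angle values are invented purely for illustration and are not measured data. The function name is hypothetical.

```python
def mean_absolute_error(estimated, reference):
    """Mean absolute error between two equally long, time-aligned series."""
    if len(estimated) != len(reference):
        raise ValueError("series must have the same length")
    if not estimated:
        raise ValueError("series must not be empty")
    total = sum(abs(e - r) for e, r in zip(estimated, reference))
    return total / len(estimated)


# Invented knee flexion angles in degrees, for illustration only.
markerless_deg = [10.0, 20.0, 30.0]
reference_deg = [12.0, 17.0, 30.0]
print(mean_absolute_error(markerless_deg, reference_deg))
```

In practice the two systems must first be synchronised and resampled to a common time base; otherwise timing offsets inflate the error.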

AI should be understood as a decision-support tool that enhances observation at scale rather than a replacement for practitioner expertise. The clinician's mechanical reasoning, informed by frameworks such as MMSx, remains the interpretive layer that transforms pixel-level kinematic detection into clinically actionable assessment.

Conclusion

Artificial intelligence is reshaping biomechanics by extending rigorous movement analysis beyond the laboratory. Its primary significance lies not in the accuracy of joint angle detection per se, but in translating pose estimation outputs into mechanically meaningful interpretations of force, torque, and alignment grade. By anchoring AI outputs to established principles of mechanics through the MMSx framework, practitioners can significantly enhance the scale, accessibility, and clinical utility of movement science across athletic performance, injury prevention, and rehabilitation settings.

Declarations

This is a review article. No human participants, animal subjects, or primary trial data were involved. Ethics approval was not required.

The authors declare no conflicts of interest. No AI software vendors provided funding, products, or study design input for this review.

This research received no external funding. The work was produced under the independent academic auspices of the MMSx Authority Institute.

Conceptualisation & framework: N.M. Technical content: G.M. Methodology review: S.H. Manuscript writing: N.M. All authors reviewed and approved the final version.

References

All references formatted in accordance with APA 7th Edition. Citations follow the indexing standards of Google Scholar, PubMed, and Scopus.

- Winter, D. A. (2009). Biomechanics and motor control of human movement (4th ed.). Wiley. https://doi.org/10.1002/9780470549148

- Hamill, J., Knutzen, K. M., & Derrick, T. R. (2015). Biomechanical basis of human movement (4th ed.). Lippincott Williams & Wilkins.

- Cao, Z., Hidalgo, G., Simon, T., Wei, S. E., & Sheikh, Y. (2021). OpenPose: Realtime multi-person 2D pose estimation using part affinity fields. IEEE Transactions on Pattern Analysis and Machine Intelligence, 43(1), 172–186. https://doi.org/10.1109/TPAMI.2019.2929257

- Halilaj, E., Rajagopal, A., Fiterau, M., Hicks, J. L., Hastie, T. J., & Delp, S. L. (2018). Machine learning in human movement biomechanics: Best practices, common pitfalls, and new opportunities. Journal of Biomechanics, 81, 1–11. https://doi.org/10.1016/j.jbiomech.2018.09.009

- Nakano, N., Sakura, T., Ueda, K., Omura, L., Kimura, A., Iino, Y., Fukashiro, S., & Yoshioka, S. (2020). Evaluation of 3D markerless motion capture accuracy using OpenPose with multiple cameras. Frontiers in Sports and Active Living, 2, 50. https://doi.org/10.3389/fspor.2020.00050

- Washabaugh, E. P., Shanmugam, T. A., Krishnan, C., & Augenstein, T. E. (2022). Validity and repeatability of inertial measurement units for measuring gait parameters. Gait & Posture, 95, 8–14. https://doi.org/10.1016/j.gaitpost.2022.03.004

- Cronin, N. J., Rantalainen, T., Ahtiainen, J. P., Hynynen, E., & Waller, B. (2019). Markerless 2D kinematic analysis of underwater running: A deep learning approach. Journal of Biomechanics, 87, 75–82. https://doi.org/10.1016/j.jbiomech.2019.02.021

- Mundt, M., Koeppe, A., David, S., Witter, T., Bamer, F., Potthast, W., & Markert, B. (2020). Prediction of ground reaction force and joint moments based on optical motion capture data during gait. Medical Engineering & Physics, 86, 29–36. https://doi.org/10.1016/j.medengphy.2020.10.001

- Stenum, J., Rossi, C., & Roemmich, R. T. (2021). Two-dimensional video-based analysis of human gait using pose estimation. PLOS Computational Biology, 17(4), e1008935. https://doi.org/10.1371/journal.pcbi.1008935

- Bernstein, N. A. (1967). The coordination and regulation of movements. Pergamon Press.